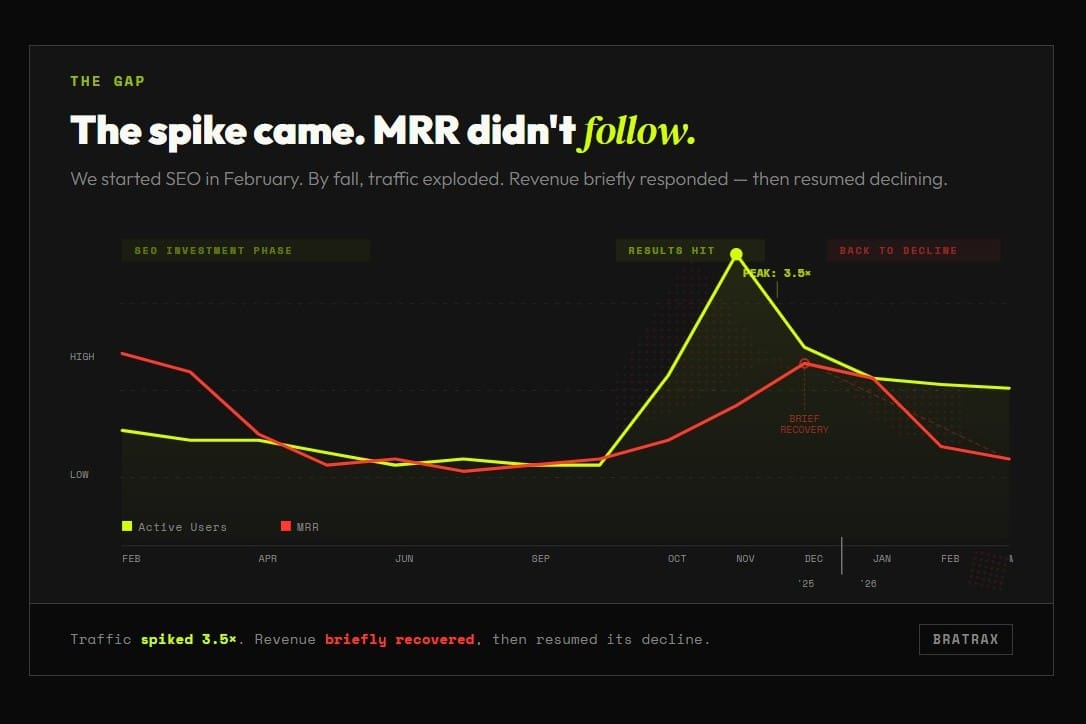

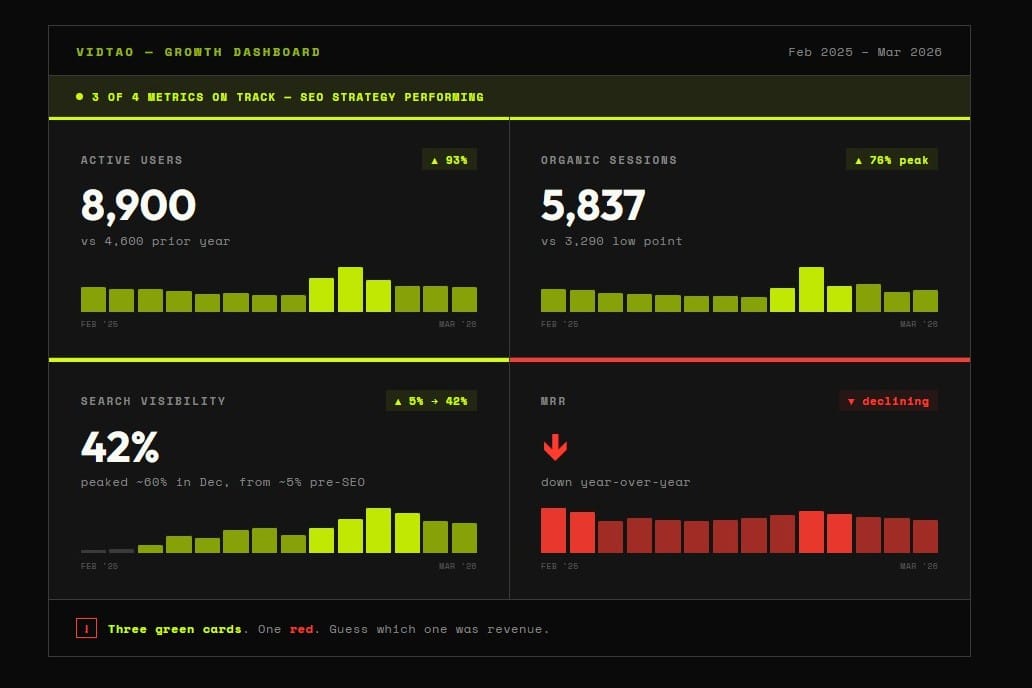

About a year ago, we decided to get serious about VidTao's growth. MRR had been dipping, and when we looked at the data, we noticed it correlated with a dip in website traffic. The logic felt obvious: more visibility, more signups, more revenue. So we went all in.

We overhauled our SEO strategy from scratch. We researched keywords, rebuilt our content processes, and made sure SEO was firmly integrated into how we produce and publish everything. This wasn't a side project. It was months of focused effort from multiple people.

And it worked. Traffic grew significantly. Pages getting indexed, organic numbers climbing, the dashboard looking better every month. By any content marketing standard, the strategy was a success.

But MRR didn't follow.

It stabilized for a while. Improved briefly. Then started declining again. And again. Meanwhile, the traffic numbers kept looking fantastic.

This is the part where, in a normal business article, the author says "and then we realized X and fixed it." We're not there yet. We're still in it.

Our current theory is that the real problem is conversion from free trial to paid. The product itself keeps getting better (our paying users stick around, and the feedback from active users is strong). So it's less about the product and more about whether trial users are getting deep enough into it to see what it can actually do for them. That's a different problem than the one we were solving with SEO. We don't have a clean answer yet.

What we do know is this: the SEO investment wasn't a mistake. Those processes are now built into how we operate, and good organic visibility is good organic visibility regardless. But if we're honest about it, we probably should have distributed our effort differently. Less "get more people here" and more "make sure the people who are here are converting." A more balanced approach would have surfaced the real problem sooner.

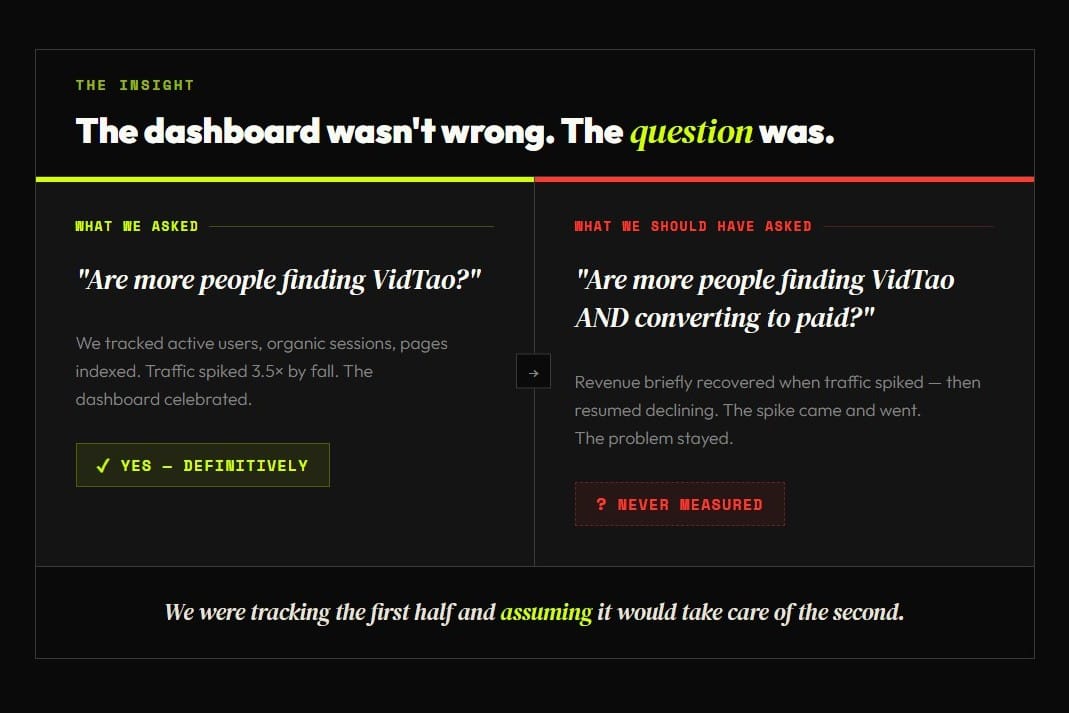

The dashboard wasn't wrong. The question was.

This is the thing that's been nagging at me. At no point did the data lie to us. Traffic really was going up. The SEO metrics really were improving. Every number on the dashboard was accurate.

The problem was the question we were asking. "Are more people finding VidTao?" Yes. Definitively yes. But that was never the question that mattered.

The question that mattered was "are more people finding VidTao AND converting to paid?" We were tracking the first half and assuming it would take care of the second.
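To make the two halves of that question concrete, here is a minimal sketch in Python of what checking them side by side could look like. Everything in it is an assumption for illustration: the `MonthlyFunnel` and `diverging_months` names are hypothetical, and the numbers are made up, not VidTao's data. It flags any month where visitors went up but new paid customers did not.

```python
from dataclasses import dataclass


@dataclass
class MonthlyFunnel:
    """One month of funnel counts (hypothetical structure, illustrative only)."""
    month: str
    visitors: int   # top of funnel: people who found the site
    trials: int     # free trials started
    paid: int       # trials that converted to paid

    @property
    def trial_to_paid(self) -> float:
        # Share of trials that became paying customers; 0.0 if there were no trials.
        return self.paid / self.trials if self.trials else 0.0


def diverging_months(funnel: list[MonthlyFunnel]) -> list[str]:
    """Return months where visitors rose vs. the prior month but paid did not."""
    flagged = []
    for prev, cur in zip(funnel, funnel[1:]):
        if cur.visitors > prev.visitors and cur.paid <= prev.paid:
            flagged.append(cur.month)
    return flagged


# Made-up numbers, chosen only to show the pattern.
funnel = [
    MonthlyFunnel("2024-01", visitors=10_000, trials=400, paid=40),
    MonthlyFunnel("2024-02", visitors=13_000, trials=480, paid=41),
    MonthlyFunnel("2024-03", visitors=17_000, trials=560, paid=38),
    MonthlyFunnel("2024-04", visitors=22_000, trials=650, paid=36),
]

for row in funnel:
    print(f"{row.month}: visitors={row.visitors}, trial-to-paid={row.trial_to_paid:.1%}")

print("Traffic up, paid flat or down:", diverging_months(funnel))
```

The point of the sketch is the pairing, not the code: the traffic number never appears without the outcome number next to it, so a month of "green arrows" on visitors cannot hide a flat or falling paid count.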

When a metric moves in the right direction, it feels like confirmation. You keep pushing. The dashboard rewards you with green arrows and upward trends. And the longer that continues, the harder it is to stop and ask whether the number you're celebrating is actually connected to the outcome you care about.

Why this keeps happening

I wish I could say this was a one-time thing, but honestly, we see versions of this pattern constantly. It's one of the reasons we built Bratrax.

A team picks a metric that feels like it should matter. Traffic, signups, active users, whatever. The metric goes up. Everyone feels good. But nobody mapped the connection between that metric and the business outcome it's supposed to drive. There's an implicit assumption that more traffic means more revenue, or that more signups mean more growth, and that assumption goes untested because the number on the screen keeps going up.

The fix isn't better dashboards or more data. It's asking a different question before you build anything:

What decision will this metric inform, and what would need to be true for that decision to be a good one?

That's what we do at Bratrax before a single dashboard gets built. We sit down with the team and agree on what matters, how it connects, and what it means. Not the data. The definitions. The relationships. The logic that ties a number on a screen to a decision in a meeting.

If we'd done that exercise for VidTao a year ago, I think we would have caught the gap between traffic and conversion much sooner. The data would have been the same. We just would have been asking different questions about it.

We're doing that work now. And when we have results, we'll share those too. That's the deal with this newsletter: real stories, including the ones where we're still figuring it out.

If this sounds familiar, I'd love to hear your version. Hit reply and tell me what metric your team has been chasing. We read every response, and if something jumps out, we'll share our take on what might be going on. Sometimes a different pair of eyes on the situation is all it takes.

Yuliya is COO at Inceptly, where she spends her days making sure operational reality matches what the dashboards promise.